According to new research from Pew, artificial intelligence is firmly part of everyday teen life. The study, based on a nationally representative survey of US teens and their parents, shows that AI chatbots are now a routine tool for learning, entertainment and sometimes emotional support.

For parents, teachers and those in online safety, the most important takeaway from the study is not that teens are using AI – it’s how quickly these tools have become embedded in the environments where children learn, socialise and seek help.

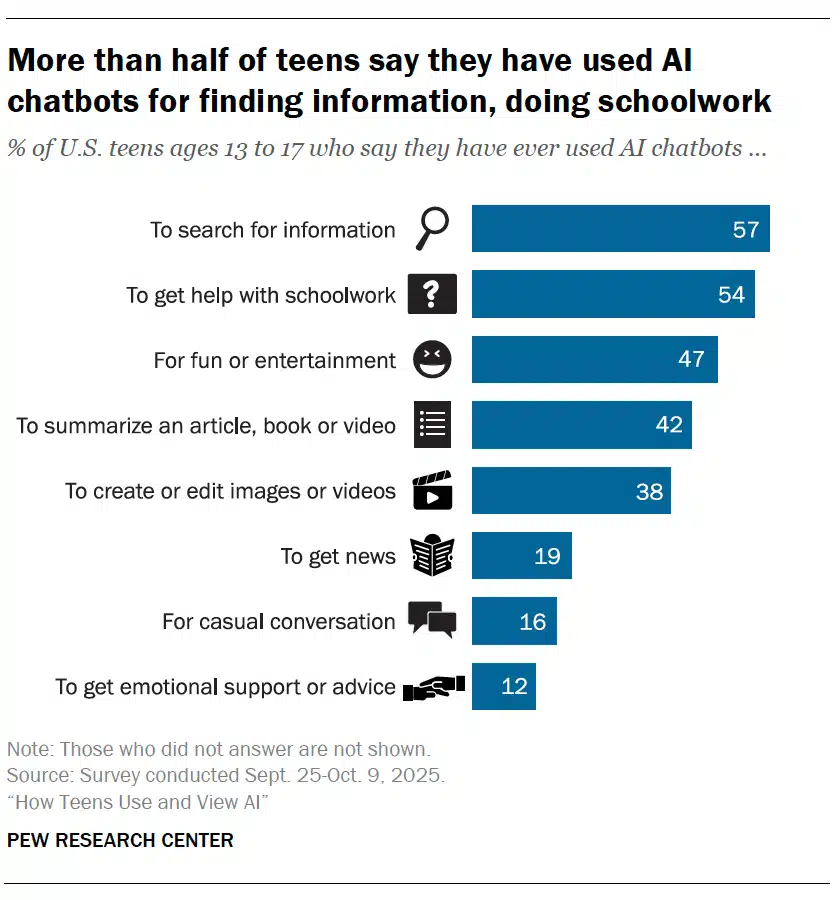

The report shows that AI chatbots are most commonly used for practical purposes. More than half of teens say they have used them to search for information or help with schoolwork, while almost half report using them for entertainment. This reflects a shift already visible in classrooms – AI is no longer a novelty that students try once, and for some it has become a regular source of academic support. Around one in ten teens say they complete all or most of their schoolwork with help from chatbots, while many others use them for at least part of their assignments.

Importantly, most students who use AI for school say it helps. About half describe chatbots as ‘extremely’, ‘very’ or ‘somewhat’ helpful for completing their work. For schools this suggests the debate about whether students should use AI has largely passed – the more urgent question is how schools guide that use so that it supports learning rather than replaces it.

The same research highlights how quickly norms around AI in education are shifting. Nearly six in ten teens believe using AI to cheat happens at least sometimes in their school, and a third think it occurs frequently. The perception matters even more than the behaviour itself. When young people assume misuse is widespread, important ethical boundaries erode. For teachers it suggests that discussions about academic integrity must now include AI explicitly rather than treating it as an emerging issue.

Beyond schoolwork teens are using AI to summarise content, generate media and obtain news. About one in five say they use chatbots to get news updates, revealing that AI is already shaping how some young people understand the world.

More concerning is the small but significant minority turning to AI in personal ways. Around 16% report using chatbots for casual conversation, and 12% say they have sought emotional support or advice from them. Those figures are far from a majority, but they perhaps signal a growing trend – AI beginning to function not just as a tool but as a source of social support. For those in safeguarding it raises familiar questions about dependency, misinformation and the absence of accountable support structures.

Despite widespread adult anxiety about artificial intelligence, teenagers themselves are generally more positive than negative about its impact on their own lives – around a third expect AI to benefit them personally in the long term, while far fewer anticipate harm. However, views are more mixed when considering society as a whole – concerns about overreliance, job loss and misinformation appear frequently in their responses.

This dual perspective is important for schools and parents: young people may see AI as helpful in their daily lives while still recognising broader risks. Supporting them requires nuance rather than blanket reassurance or alarmism.

One of the clearest demographic differences in the research concerns gender. Boys and girls report using chatbots at broadly similar rates and for similar purposes, but their attitudes diverge when asked about AI’s future impact. Teen boys are notably more optimistic: they’re more likely than girls to believe AI will have a positive effect on their own lives and on society more broadly. They’re also more inclined to think AI will outperform humans in certain tasks, including teaching skills, providing customer service or driving.

For schools this difference may signal varying levels of enthusiasm, confidence or critical scepticism among students. For parents it highlights that attitudes toward AI are shaped not just by exposure but by social and psychological factors that influence how young people interpret new technologies.

Perhaps one of the most striking findings concerns the gap between parents’ perceptions and teens’ behaviour. While about half of parents believe their child uses AI chatbots, the proportion of teens who say they actually use them is significantly higher. In addition, many parents report uncertainty about their teen’s use of AI tools. Worryingly, this mirrors earlier patterns seen with social media – adults often realise the scale of adoption only after behaviours are well established. For those in safeguarding it reinforces a need for proactive engagement rather than reactive guidance.

The study also finds that a substantial minority of parents have never discussed AI use with their teenager. Given how quickly these tools are becoming embedded in education and online life, that gap in communication could become a major vulnerability – without adult guidance and support, teens are left to develop norms and expectations from peers or the technology itself.

For those in the UK, the study arrives at a moment when regulators and safeguarding bodies are already rethinking children’s online protections. Ofcom’s children’s media literacy work and the implementation of the Online Safety Act both signal a move toward expecting platforms to build safety into design rather than respond after harm occurs. For schools it means AI can’t sit outside existing digital safety conversations. If chatbots are already influencing how young people learn, seek information and sometimes look for support, then AI literacy and risk awareness need to be treated as core elements of safeguarding practice rather than optional add-ons.

Pew’s findings show that AI has already crossed the threshold from emerging technology to an everyday part of teenage life. Young people are using it to learn, create, explore information and occasionally seek personal support. For parents and schools it means the focus should move away from whether teens will use AI and toward how they use it, what they believe about it and where guidance is needed.

Schools may need clearer policies that address both learning opportunities and ethical boundaries. Parents may need support understanding what these tools actually do and how they influence their children’s decisions. Those in safeguarding may need to treat AI as part of the digital risk environment rather than an optional add-on.

Above all, the research suggests that young people are adapting to AI faster than the adults around them. Ensuring they do so safely will depend less on restricting access and more on equipping families, schools and communities to engage with the technology realistically and early.